Sunday, November 12, 2006

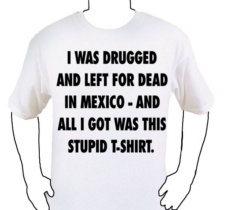

Stupid T-Shirt

How awesome is the internet? A little while ago, I was watching David Fincher's far-fetched but entertaining thriller, The Game. If you haven't seen the film, there are spoilers ahead.

At the end of the movie, some pretty unlikely things happen, but it's a lot of fun, and I think most audiences let it slide. One of the funny moments at the end is when a character gives Michael Douglas' character a t-shirt which describes his experiences. After watching the movie, I thought it would make a pretty funny t-shirt... but I couldn't remember exactly what the shirt said. Naturally, I turned to the internet. Not only was I able to figure out what it said (from multiple sites), I also found a site that actually sells the shirt.

They've even got a screenshot from the movie. Alas, it's a bit pricey for such a simplistic shirt. Still, the idea that such a shirt would be anything more than some custom thing a film nerd whipped up is pretty funny. I mean, how many people would even get the reference?

Sunday, November 05, 2006

Choice, Productivity and Feature Bloat

Jakob Nielsen's recent column on productivity and screen size referenced an interesting study comparing a feature-rich application with a simpler one:

The distinction between operations and tasks is important in application design because the goal is to optimize the user interface for task performance, rather than sub-optimize it for individual operations. For example, Judy Olson and Erik Nilsen wrote a classic paper comparing two user interfaces for large data tables. One interface offered many more features for table manipulation and each feature decreased task-performance time in specific circumstances. The other design lacked these optimized features and was thus slower to operate under the specific conditions addressed by the first design's special features.

So, which of these two designs was faster to use? The one with the fewest features. For each operation, the planning time was 2.9 seconds in the stripped-down design and 4.6 seconds in the feature-rich design. With more choices, it takes more time to make a decision on which one to use. The extra 1.7 seconds required to consider the richer feature set consumed more time than users saved by executing faster operations.

In this case, more choices mean less productivity. So why aren't all of our applications much smaller and less feature-intensive? Well, as I went over a few weeks ago, people tend to overvalue measurable things like features and undervalue less tangible aspects like usability and productivity. Here's another reason we endure feature bloat:

A lot of software developers are seduced by the old "80/20" rule. It seems to make a lot of sense: 80% of the people use 20% of the features. So you convince yourself that you only need to implement 20% of the features, and you can still sell 80% as many copies.

Unfortunately, it's never the same 20%. Everybody uses a different set of features. In the last 10 years I have probably heard of dozens of companies who, determined not to learn from each other, tried to release "lite" word processors that only implement 20% of the features. This story is as old as the PC.

That quote is from a relatively old article, and when I first read it, I still didn't get why you couldn't create a "lite" word processor that would be significantly smaller than Word, but still get the job done. Then I started using several of the more obscure features of Word, notably the "Track Changes" feature (which was a life saver at the time), which never would have made it into a "lite" version (yes, there are other options for collaborative editing these days, but you gotta use what you have at hand at the time). Add in the ever increasing computer power and ever decreasing cost of memory and storage, and feature bloat looks like less of a problem. However, as this post started out by noting, productivity often suffers as a result (and as Nielsen's article shows, productivity is more difficult to measure than counting a list of features).
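For what it's worth, the planning-time figures quoted above boil down to a simple break-even check. Here's a minimal Python sketch of that arithmetic. The 2.9 and 4.6 second planning times come from the quoted study; the per-operation execution savings I plug in at the bottom are made-up numbers, purely for illustration.

```python
# Planning times per operation, as quoted from the study above.
PLANNING_SIMPLE_S = 2.9  # stripped-down design
PLANNING_RICH_S = 4.6    # feature-rich design


def planning_overhead_s():
    """Extra seconds spent deciding which feature to use in the rich design."""
    return PLANNING_RICH_S - PLANNING_SIMPLE_S


def rich_design_wins(execution_saving_s):
    """True if a feature's faster execution outweighs the extra planning time.

    execution_saving_s is a hypothetical figure: how many seconds the
    specialized feature shaves off actually performing the operation.
    """
    return execution_saving_s > planning_overhead_s()


if __name__ == "__main__":
    print(f"extra planning per operation: {planning_overhead_s():.1f}s")
    # Hypothetical savings, not taken from the study.
    for saving in (0.5, 1.0, 2.5):
        print(f"saves {saving}s executing -> rich design faster? {rich_design_wins(saving)}")
```

In other words, a fancy feature has to save more than about 1.7 seconds of execution every time it's used just to break even, which is apparently more than the features in the study managed.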

The one approach for dealing with "featuritis" that seems to be catching on these days is starting with your "lite" version, then allowing people to install plugins to fill in the missing functionality. This is one of the things that makes Firefox so popular, as it not only allows plugins, it actually encourages users to create their own. Alas, this has led to choice problems of its own. One of my required features for any browser that I would consider for personal use is mouse gestures. Firefox has at least 4 extensions available that implement mouse gestures in one way or another (though it's not immediately obvious what the differences are, and there appear to be other extensions which utilize mouse gestures for other functions). By contrast, my other favorite browser, Opera, natively supports mouse gestures.

Of course, this is not a new approach to the feature bloat problem. Indeed, as far as I can see, this is one of the primary driving forces behind *nix-based applications. Their text editors don't have a word count feature because there is already a utility for doing so (command line: wc [filename]). And so on. It's part of *nix's modular design, and it's one of the things that makes it great, but it also presents problems of its own (which I belabored at length last week).
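To give a sense of how small these single-purpose utilities can be, here's a rough Python sketch that reports the same three numbers wc does by default (lines, words, bytes). This is just an illustration of the idea, not how wc is actually implemented, and the function name is my own invention.

```python
import sys


def wc_counts(text):
    """Return (lines, words, bytes) for a string, roughly like wc's defaults."""
    lines = text.count("\n")           # wc counts newline characters
    words = len(text.split())          # whitespace-separated words
    size = len(text.encode("utf-8"))   # size in bytes when encoded as UTF-8
    return lines, words, size


if __name__ == "__main__":
    # Usage: python wc_sketch.py somefile.txt  (the script name is arbitrary)
    if len(sys.argv) > 1:
        with open(sys.argv[1], encoding="utf-8", newline="") as handle:
            print(*wc_counts(handle.read()), sys.argv[1])
```

Because the tool does one thing, an editor doesn't need its own word count; it just needs a way to hand text off to something like this.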

In the end, it comes down to tradeoffs. Humans don't solve problems, they exchange problems, and so on. Right now, the plugin strategy seems to make a reasonable tradeoff, but it certainly isn't perfect.

Monday, October 30, 2006

Horror Movie Corner

Halloween is upon us once again, and since this is one of the few holidays in which I write something that is somewhat timely, I figure I should continue the tradition (and this year, I'll actually publish the post before Halloween). A few horror movies I've had the pleasure to view recently:

- The Texas Chain Saw Massacre (1974): I'd seen this once before, a long time ago, and was pretty well creeped out by it. About as pure an exercise in horror as is possible in a movie. As such, there are some who feel it lacks real purpose (and thus that it is a waste of talent), but it is so well executed that I can't help but love it and it didn't really lose any impact upon my second viewing this past weekend. It's got that gritty 70s horror feel, and you can almost feel the sweaty, grungy Texas setting. Speaking of which, it's interesting to note that what makes this movie so creepy is not just the freaky chainsaw-wielding maniac, it's that there really isn't anywhere to go. Unlike a lot of horror movies, there really isn't anywhere safe to run. And when our heroine is cornered and you think to yourself "Just jump out of the window, you moron," she actually proceeds to jump out the window. Of course, that only buys her a minute or so, but it's still a refreshing difference. It's obviously a low budget film, but it doesn't detract at all from the experience (and I think it contributes a little to the atmosphere too). It unfolds in a surprisingly realistic way, and that is part of why it is so effective. There's a ton more that could be said about this, but if you've never found yourself on the business end of a chainsaw or a meat hook, and you don't mind that it doesn't really seek to do anything deeper than creeping you out, it's worth watching. ***1/2

- Re-Animator (1985): In the 1980s, we started to see the emergence of horror films that were aware of how ridiculous they were, and even embraced the cheesiness in a humorous way. These films were less scary than they were funny, and Re-Animator is one of the better examples of this. It's a ton of fun, and it has taken on an added dimension of humor recently as one of the characters bears a striking resemblance to John Kerry. Heh. It's silly, it knows it's silly, and it's a lot of fun. **1/2

- Cabin Fever (2002): In a lot of ways, this movie starts off similar to The Texas Chain Saw Massacre. There's an element of realism in the setup, and the setting is a similar sort of desolate, helpless area. Alas, the tendency to wink at the audience and descend into gory mayhem gets the better of writer/director Eli Roth (who also made this year's squirm-inducing Hostel) and the movie becomes unhinged about halfway through. However, the ending (last 10-20 minutes or so) manages to transcend the cheesy gore as Roth somehow orchestrates a series of simultaneously idiotic and yet brilliant sequences. This ending kicks off with a car crashing into a deer, moves on to a harmonica... incident, has a nice shootout, and then goes into hyperdrive when Roth makes a joke involving a racist shopkeep and a rifle. Oh, and I almost forgot about the lemonade. It's completely ludicrous.

It reminded me of the ending of Mario Bava's Bay of Blood, a film notable mostly for its inventive death sequences (many of which were lifted by Friday the 13th Part 2) and its totally unexpected and absurd ending involving two kids and a shotgun. Roth manages to capture this feeling several times as his film winds down, and that's actually pretty cool. In the end, it's not the greatest horror film out there, but if you don't mind movies that start realistically and then take the premise over the top as the film goes on, it might be worth checking out. **1/2

- Save it with the music: Wherein I discuss the role of music in horror films.

- Horror: Wherein I blather on and on about more obscure horror films and novels.

- Friday the 13th: Wherein Weasello hilariously reviews all of the movies in the Friday the 13th movie series.

- The Biology of B-Movie Monsters: Wherein someone takes B-Movies way too seriously. Still interesting though.

Sunday, October 29, 2006

Adventures in Linux, Paradox of Choice Edition

Last week, I wrote about the paradox of choice: having too many options often leads to something akin to buyer's remorse (paralysis, regret, dissatisfaction, etc...), even if the choice made was ultimately a good one. I had attended a talk given by Barry Schwartz on the subject (which he's written a book about) and I found his focus on the psychological impact of making decisions fascinating. In the course of my ramblings, I made an offhand comment about computers and software:

... the amount of choices in assembling your own computer can be stifling. This is why computer and software companies like Microsoft, Dell, and Apple (yes, even Apple) insist on mediating the user's experience with their hardware & software by limiting access (i.e. by limiting choice). This turns out to be not so bad, because the number of things to consider really is staggering.

The foolproofing that these companies do can sometimes be frustrating, but for the most part, it works out well. Linux, on the other hand, is the poster child for freedom and choice, and that's part of why it can be a little frustrating to use, even if it is technically a better, more stable operating system (I'm sure some OSX folks will get a bit riled with me here, but bear with me). You see this all the time with open source software, especially when switching from regular commercial software to open source.

One of the admirable things about Linux is that it is very well thought out and every design decision is usually made for a specific reason. The problem, of course, is that those reasons tend to have something to do with making programmers' lives easier... and most regular users aren't programmers. I dabble a bit here and there, but not enough to really benefit from these efficiencies. I learned most of what I know working with Windows and Mac OS, so when some enterprising open source developer decides that he doesn't like the way a certain Windows application works, you end up seeing some radical new design or paradigm which needs to be learned in order to use it. In recent years a lot of work has gone into making Linux friendlier for the regular user, and usability (especially during the installation process) has certainly improved. Still, a lot of room for improvement remains, and I think part of that has to do with the number of choices people have to make.

Let's start at the beginning and take an old Dell computer that we want to install Linux on (this is basically the computer I'm running right now). First question: which distribution of Linux do we want to use? Well, to be sure, we could start from scratch and just install the Linux Kernel and build upwards from there (which would make the process I'm about to describe even more difficult). However, even Linux has its limits, so there are lots of distributions of Linux which package the OS, desktop environments, and a whole bunch of software together. This makes things a whole lot easier, but at the same time, there are a ton of distributions to choose from. The distributions differ in a lot of ways for various reasons, including technical (issues like hardware support), philosophical (some distros pooh-pooh commercial involvement) and organizational (things like support and updates). These are all good reasons, but when it's time to make a decision, what distro do you go with? Fedora? Suse? Mandriva? Debian? Gentoo? Ubuntu? A quick look at Wikipedia reveals a comparison of Linux distros, but there are a whopping 67 distros listed and compared in several different categories. Part of the reason there are so many distros is that there are a lot of specialized distros built off of a base distro. For example, Ubuntu has several distributions, including Kubuntu (which defaults to the KDE desktop environment), Edubuntu (for use in schools), Xubuntu (which uses yet another desktop environment called Xfce), and, of course, Ubuntu: Christian Edition (Linux for Christians!).

So here's our first choice. I'm going to pick Ubuntu, primarily because their tagline is "Linux for Human Beings" and hey, I'm human, so I figure this might work for me. Ok, and it has a pretty good reputation for being an easy to use distro focused more on users than things like "enterprises."

Alright, the next step is to choose a desktop environment. Lucky for us, this choice is a little easier, but only because Ubuntu splits desktop environments into different distributions (unlike many others which give you the choice during installation). For those who don't know what I'm talking about here, I should point out that a desktop environment is basically an operating system's GUI - it uses the desktop metaphor and includes things like windows, icons, folders, and abilities like drag-and-drop. Microsoft Windows and Mac OSX are desktop environments, but they're relatively locked down (to ensure consistency and ease of use (in theory, at least)). For complicated reasons I won't go into, Linux has a modular system that allows for several different desktop environments. As with Linux distributions, there are many desktop environments. However, there are really only two major players: KDE and Gnome. Which is better appears to be a perennial debate amongst Linux geeks, but they're both pretty capable (there are a couple of other semi-popular ones like Xfce and Enlightenment, and then there's the old standby, twm (Tom's Window Manager)). We'll just go with the default Gnome installation.

Note that we haven't even started the installation process and if we're regular users, we've already made two major choices, each of which will make us wonder things like: Would I have this problem if I installed Suse instead of Ubuntu? Is KDE better than Gnome?

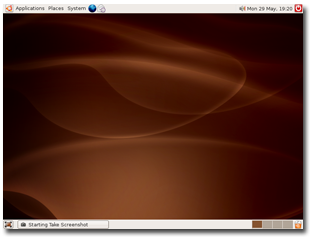

But now we're ready for installation. This, at least, isn't all that bad, depending on the computer you're starting with. Since we're using an older Dell model, I'm assuming that the hardware is fairly standard stuff and that it will all be supported by my distro (if I were using a more bleeding edge type box, I'd probably want to check out some compatibility charts before installing). As it turns out, Ubuntu and its focus on creating a distribution that human beings can understand has a pretty painless installation. It was actually a little easier than Windows, and when I was finished, I didn't have to remove the mess of icons and trial software offers (purchasing a Windows PC through someone like HP is apparently even worse). When you're finished installing Ubuntu, you're greeted with a desktop that looks like this (click the pic for a larger version):

No desktop clutter, no icons, no crappy trial software. It's beautiful! It's a little different from what we're used to, but not horribly so. Windows users will note that there are two bars, one on the top and one on the bottom, but everything is pretty self explanatory and this desktop actually improves on several things that are really strange about Windows (i.e. to turn off your computer, first click on "Start!"). Personally, I think having two toolbars is a bit much so I get rid of one of them, and customize the other so that it has everything I need (I also put it at the bottom of the screen for several reasons I won't go into here as this entry is long enough as it is).

Alright, we're almost home free, and the installation was a breeze. Plus, lots of free software has been installed, including Firefox, Open Office, and a bunch of other good stuff. We're feeling pretty good here. I've got most of my needs covered by the default software, but let's just say we want to install Amarok, so that we can update our iPod. Now we're faced with another decision: How do we install this application? Since Ubuntu has so thoughtfully optimized their desktop for human use, one of the things we immediately notice in the "Applications" menu is an option which says "Add/Remove..." and when you click on it, a list of software comes up and it appears that all you need to do is select what you want and it will install it for you. Sweet! However, the list of software there doesn't include every program, so sometimes you need to use the Synaptic package manager, which is also a GUI application installation program (though it appears to break each piece of software into smaller bits). Also, in looking around the web, you see that someone has explained that you should download and install software by typing this in the command line: apt-get install amarok. But wait! We really should be using the aptitude command instead of apt-get to install applications.

If you're keeping track, that's four different ways to install a program, and I haven't even gotten into repositories (main, restricted, universe, multiverse, oh my!), downloadable package files (these operate more or less the way a Windows user would download a .exe installation file, though not exactly), let alone downloading the source code and compiling (sounds fun, doesn't it?). To be sure, they all work, and they're all pretty easy to figure out, but there's little consistency, especially when it comes to support (most of the time, you'll get a command line in response to a question, which is completely at odds with the expectations of someone switching from Windows). Also, in the case of Amarok, I didn't fare so well (for reasons belabored in that post).

Once installed, most software works pretty much the way you'd expect. As previously mentioned, open source developers sometimes get carried away with their efficiencies, which can sometimes be confusing to a newbie, but for the most part, it works just fine. There are some exceptions, like the absurd Blender, but that's not necessarily a hugely popular application that everyone needs.

Believe it or not, I'm simplifying here. There are that many choices in Linux. Ubuntu tries its best to make things as simple as possible (with considerable success), but when using Linux, it's inevitable that you'll run into something that requires you to break down the metaphorical walls of the GUI and muck around in the complicated swarm of text files and command lines. Again, it's not that difficult to figure this stuff out, but all these choices contribute to the same decision fatigue I discussed in my last post: anticipated regret (there are so many distros - I know I'm going to choose the wrong one), actual regret (should I have installed Suse?), dissatisfaction, escalation of expectations (I've spent so much time figuring out what distro to use that it's going to perfectly suit my every need!), and leakage (i.e. a bad installation process will affect what you think of a program, even after installing it - your feelings before installing leak into the usage of the application).

None of this is to say that Linux is bad. It is free, in every sense of the word, and I believe that's a good thing. But if they ever want to create a desktop that will rival Windows or OSX, someone needs to create a distro that clamps down on some of these choices. Or maybe not. It's hard to advocate something like this when you're talking about software that is so deeply predicated on openness and freedom. However, as I concluded in my last post:

Without choices, life is miserable. When options are added, welfare is increased. Choice is a good thing. But too much choice causes the curve to level out and eventually start moving in the other direction. It becomes a matter of tradeoffs. Regular readers of this blog know what's coming: We don't so much solve problems as we trade one set of problems for another, in the hopes that the new set of problems is more favorable than the old.

Choice is a double edged sword, and by embracing that freedom, Linux has to deal with the bad as well as the good (just as Microsoft and Apple have to deal with the bad aspects of suppressing freedom and choice). Is it possible to create a Linux distro that is as easy to use as Windows or OSX while retaining the openness and freedom that makes it so wonderful? I don't know, but it would certainly be interesting.

Sunday, October 22, 2006

The Paradox of Choice

At the UI11 Conference I attended last week, one of the keynote presentations was made by Barry Schwartz, author of The Paradox of Choice: Why More Is Less. Though he believes choice to be a good thing, his presentation focused more on the negative aspects of offering too many choices. He walks through a number of examples that illustrate the problems with our "official syllogism" which is:

- More freedom means more welfare

- More choice means more freedom

- Therefore, more choice means more welfare

So how do we react to all these choices? Luke Wroblewski provides an excellent summary, which I will partly steal (because, hey, he's stealing from Schwartz after all):

- Paralysis: When faced with so many choices, people are often overwhelmed and put off the decision. I often find myself in such a situation: Oh, I don't have time to evaluate all of these options, I'll just do it tomorrow. But, of course, tomorrow is usually not so different than today, so you see a lot of procrastination.

- Decision Quality: Of course, you can't procrastinate forever, so when forced to make a decision, people will often use simple heuristics to evaluate the field of options. In retail, this often boils down to evaluation based mostly on Brand and Price. I also read a recent paper on feature fatigue (full article not available, but the abstract is there) that fits nicely here.

In fields where there are many competing products, you see a lot of feature bloat. Loading a product with all sorts of bells and whistles will differentiate that product and often increase initial sales. However, all of these additional capabilities come at the expense of usability. What's more, even when people know this, they still choose high-feature models. The only thing that really helps is when someone actually uses a product for a certain amount of time, at which point they realize that they either don't use the extra features or that the tradeoffs in terms of usability make the additional capabilities considerably less attractive. Part of the problem is perhaps that usability is an intangible and somewhat subjective attribute of a product. Intellectually, everyone knows that it is important, but when it comes down to decision-time, most people base their decisions on something that is more easily measured, like number of features, brand, or price. This is also part of why focus groups are so bad at measuring usability. I've been to a number of focus groups that start with a series of exercises in front of a computer, then end with a roundtable discussion about their experiences. Usually, the discussion was completely at odds with what the people actually did when in front of the computer. Watch what they do, not what they say...

- Decision Satisfaction: When presented with a lot of choices, people may actually do better for themselves, yet they often feel worse due to regret or anticipated regret. Because people resort to simplifying their decision making process, and because they know they're simplifying, they might also wonder if one or more of the options they cut was actually better than what they chose. A little while ago, I bought a new cell phone. I actually did a fair amount of work evaluating the options, and I ended up going with a low-end no-frills phone... and instantly regretted it. Of course, the phone itself wasn't that bad (and for all I know, it was better than the other phones I passed over), but I regret dismissing some of the other options, such as the camera (how many times over the past two years have I wanted to take a picture and thought Hey, if I had a camera on my phone I could have taken that picture!)

- Escalation of expectations: When we have so many choices and we do so much work evaluating all the options, we begin to expect more. When things were worse (i.e. when there were fewer choices), it was much easier to exceed expectations. In the cell phone example above, part of the regret was no doubt fueled by the fact that I spent a lot of time figuring out which phone to get.

- Maximizer Impact: There are some people who always want to have the best, and the problems inherent in too many choices hit these people the hardest.

- Leakage: The conditions present when you're making a decision exert influence long after the decision has actually been made, contributing to the dissatisfaction (i.e. regret, anticipated regret) and escalation of expectations outlined above.

Another example is my old PC which has recently kicked the bucket. I actually assembled that PC from a bunch of parts, rather than going through a mainstream company like Dell, and the number of components available would probably make the Circuit City stereo example I gave earlier look tiny by comparison. Interestingly, this diversity of choices for PCs is often credited as part of the reason PCs overtook Macs:

Back in the early days of Macintoshes, Apple engineers would reportedly get into arguments with Steve Jobs about creating ports to allow people to add RAM to their Macs. The engineers thought it would be a good idea; Jobs said no, because he didn't want anyone opening up a Mac. He'd rather they just throw out their Mac when they needed new RAM, and buy a new one.

Of course, we know who won this battle. The "Wintel" PC won: The computer that let anyone throw in a new component, new RAM, or a new peripheral when they wanted their computer to do something new. Okay, Mac fans, I know, I know: PCs also "won" unfairly because Bill Gates abused his monopoly with Windows. Fair enough.

But the fact is, as Hill notes, PCs never aimed at being perfect, pristine boxes like Macintoshes. They settled for being "good enough" -- under the assumption that it was up to the users to tweak or adjust the PC if they needed it to do something else.

But as Schwartz would note, the amount of choices in assembling your own computer can be stifling. This is why computer and software companies like Microsoft, Dell, and Apple (yes, even Apple) insist on mediating the user's experience with their hardware by limiting access (i.e. by limiting choice). This turns out to be not so bad, because the number of things to consider really is staggering. So why was I so happy with my computer? Because I really didn't make many of the decisions - I simply went over to Ars Technica's System Guide and used their recommendations. When it comes time to build my next computer, what do you think I'm going to do? Indeed, Ars is currently compiling recommendations for their October system guide, due out sometime this week. My new computer will most likely be based off of their "Hot Rod" box. (Linux presents some interesting issues in this context as well, though I think I'll save that for another post.)

So what are the lessons here? One of the big ones is to separate the analysis from the choice by getting recommendations from someone else (see the Ars Technica example above). In the market for a digital camera? Call a friend (preferably one who is into photography) and ask them what to get. Another thing that strikes me is that just knowing about this can help you overcome it to a degree. Try to keep your expectations in check, and you might open up some room for pleasant surprises (doing this is surprisingly effective with movies). If possible, try using the product first (borrow a friend's, use a rental, etc...). Don't try to maximize the results so much; settle for things that are good enough (this is what Schwartz calls satisficing).

Without choices, life is miserable. When options are added, welfare is increased. Choice is a good thing. But too much choice causes the curve to level out and eventually start moving in the other direction. It becomes a matter of tradeoffs. Regular readers of this blog know what's coming: We don't so much solve problems as we trade one set of problems for another, in the hopes that the new set of problems is more favorable than the old. So where is the sweet spot? That's probably a topic for another post, but my initial thoughts are that it would depend heavily on what you're doing and the context in which you're doing it. Also, if you were to take a wider view of things, there's something to be said for maximizing options and then narrowing the field (a la the free market). Still, the concept of choice as a double edged sword should not be all that surprising... after all, freedom isn't easy. Just ask Spider Man.

Wednesday, October 18, 2006

Bowling + Rollercoasters = Fun

Sweet merciful crap:

I think I peed a little. Nice work, Shamus.

Sunday, October 15, 2006

Link Dump

I've been quite busy lately so once again it's time to unleash the chain-smoking monkey research squad and share the results:

- The Truth About Overselling!: Ever wonder how web hosting companies can offer obscene amounts of storage and bandwidth these days? It turns out that these web hosting companies are offering more than they actually have. Josh Jones of Dreamhost explains why this practice is popular and how they can get away with it (short answer - most people emphatically don't use or need that much bandwidth).

- Utterly fascinating pseudo-mystery on Metafilter. Someone got curious about a strange flash advertisement, and a whole slew of people started investigating, analyzing the flash file, plotting stuff on a map, etc... Reminded me a little of that whole Publius Enigma thing [via Chizumatic].

- Weak security in our daily lives: "Right now, I am going to give you a sequence of minimal length that, when you enter it into a car's numeric keypad, is guaranteed to unlock the doors of said car. It is exactly 3129 keypresses long, which should take you around 20 minutes to go through." [via Schneier]

- America's Most Fonted: The 7 Worst Fonts: Fonts aren't usually a topic of discussion here, but I thought it was funny that the Kaedrin logo (see upper left hand side of this page) uses the #7 worst font. But it's only the logo and that's ok... right? RIGHT?

- Architecture is another topic rarely discussed here, but I thought that the new trend of secret rooms was interesting. [via Kottke]
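A quick aside on the keypad item in the list above: the 3129 figure is what you'd get from a de Bruijn sequence, which packs every possible code into one overlapping stream of keypresses. Here's a minimal Python sketch. I'm assuming a keypad with 5 buttons and 5-digit codes, and that the keypad accepts a continuous stream with no "enter" or reset between attempts; those details aren't in the quote above, but they're consistent with the number (5^5 + 4 = 3129). The buttons are labeled 0 through 4 purely for convenience.

```python
def de_bruijn(k, n):
    """Return a cyclic de Bruijn sequence B(k, n) over the symbols 0..k-1.

    Every length-n string over k symbols appears exactly once when the
    result is read as a cycle. This is the standard recursive construction
    that concatenates Lyndon words whose length divides n.
    """
    a = [0] * (k * n)
    sequence = []

    def db(t, p):
        if t > n:
            if n % p == 0:
                sequence.extend(a[1:p + 1])
        else:
            a[t] = a[t - p]
            db(t + 1, p)
            for j in range(a[t - p] + 1, k):
                a[t] = j
                db(t + 1, t)

    db(1, 1)
    return sequence


def keypad_sequence(buttons=5, code_length=5):
    """Unroll the cycle into a linear list of keypresses.

    Repeating the first code_length - 1 symbols at the end makes sure the
    codes that wrap around the cycle are also covered.
    """
    cyclic = de_bruijn(buttons, code_length)
    return cyclic + cyclic[:code_length - 1]


if __name__ == "__main__":
    presses = keypad_sequence()
    print(len(presses))  # 3129
```

Compare that to trying all 3125 codes one at a time, which would take 15,625 keypresses. The overlap is what makes the attack practical.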

Sunday, October 08, 2006

Linux Humor & Blog Notes

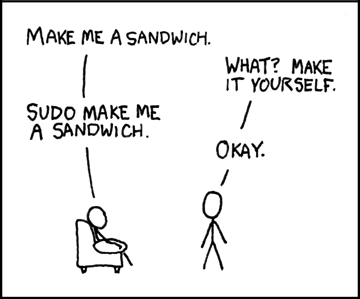

I'll be attending the User Interface 11 conference this week, and as such, won't have much time to check in. Try not to wreck the place while I'm gone. Since I'm off to the airport in fairly short order (why did I schedule a flight to conflict with the Eagles/Cowboys matchup? Dammit!) here's a quick comic with some Linux humor:

The author, Randall Munroe, is a NASA scientist who has a keen sense of humor (and is apparently deathly afraid of raptors) and publishes a new comic a few times a week. The comic above is one of his most popular, and even graces one of his T-Shirts (I also like the "Science. It works, bitches." shirt).

I'm sure I'll be able to wrangle some internet access during the week, but chances are that it will be limited (I need to get me a laptop at some point). I'll be back late Thursday night, so posting will probably resume next Sunday.